You know the favorite question of 4-to-10-year-olds: “Why?” Why is the sky blue? Why do I have to eat broccoli? Why shouldn’t I cross the street when the light is red? That childlike curiosity, which drove us to try to understand the world around us, now seems to be nothing more than a distant memory.

Who hasn’t, at some point, answered a question with, “Hold on, let me ask ChatGPT”? The advent of Generative AI has deprived us of a fundamental element that makes us human: the right not to know. And because it is never comfortable to be in a situation where we have to admit we don’t have the answer, it has become almost natural to rely on AI to get us out of a jam.

The Convenience of Not Knowing

So we no longer ask “why”; instead, we query the AI and trust it blindly. Yet this simple question should be at the very heart of our use of AI. With every interaction with this technology, our first instinct should be to ask: Why am I getting this result?

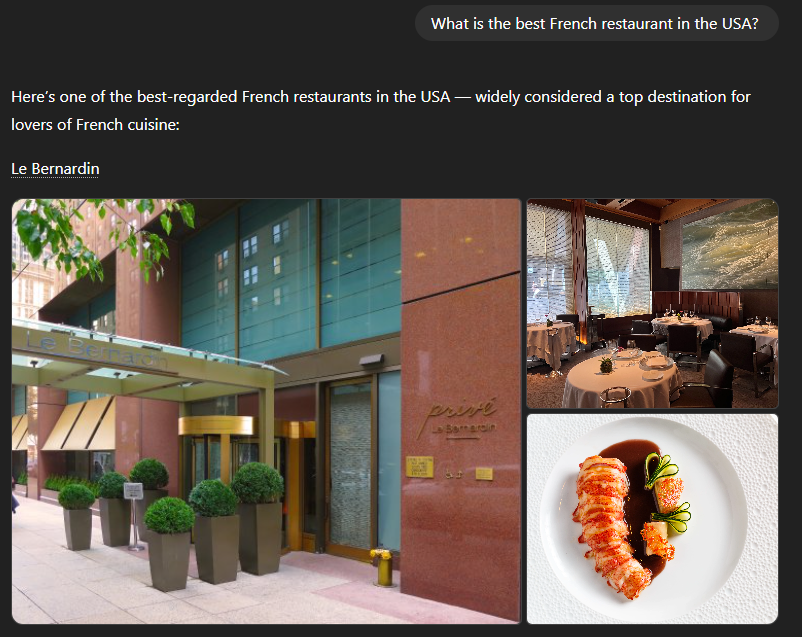

For example, if I ask ChatGPT, “What is the best French restaurant in the United States?” it answers, “Le Bernardin.”

Aside from the fact that I’ve never heard of this restaurant (perhaps you have; I’m not a gastronomy expert, after all), I have no idea where this recommendation comes from. Why this answer? Has ChatGPT tasted the chef’s dishes? Certainly not. Did it consult rankings, user reviews, press articles? I cannot tell.

The “Black Box” Problem

And that is exactly the problem with most of the mainstream artificial intelligence models we use today. As users, we have no idea how they are built, what information they draw on to generate their answers, or what most influences them in choosing one word over another. In short, they are “black boxes.”

In itself, not knowing every secret of a tool developed by a private company might be considered normal. But when these tools concretely influence our lives without us knowing exactly how or why, then it is high time to sound the alarm.

When the Stakes Are High

So yes, not knowing why ChatGPT recommends one restaurant over another isn’t going to change my life. But in fields like healthcare, justice, or finance, the stakes are completely different. What, concretely, can we do in those cases? Artificial intelligence is a technology with incredible potential, and I genuinely believe it can improve our lives. That is precisely why I chose to join the Tomorrow University MBA program and pursue a specialization in “Sustainable AI & Emerging Technologies.”

The Solution: Explainable AI (XAI)

The more people have access to AI, and the more they understand this technology, the better equipped they will be to create positive and impactful solutions for society. To achieve this, we must make AI transparent and understandable. That is why, as part of my studies, I have decided to dive into the world of “Explainable AI,” or “XAI.” I want to return to that time when we questioned everything. I want to reach a point where I don’t have to wonder why an AI gives me one answer instead of another, because the AI itself will be able to precisely justify its reasoning. I want to be able to ask “Why?”

Join the Journey

Through this website, I invite you to embark on this journey with me to discover Explainable AI. We will try to understand what lies behind this concept, the existing solutions, concrete applications, and more. Above all, we will attempt to define a future in which we are enlightened users of AI. If this topic fascinates you, be sure to bookmark this site. And if you ever wish to contribute, or simply help me understand the subject better, I invite you to contact me.